Looking to the Brain for Next-Gen AI

With the explosive advent of artificial intelligence (AI), from impressively articulate conversational agents to increasingly autonomous robots of various embodiments, it is easy to forget the still unparalleled capability and efficiency of the natural intelligence of the human brain. The brain simultaneously regulates essential processes like breathing and blood circulation, controls numerous functions such as locomotion and multi-modal sensing, and maintains the complex cognitive processes that drive thinking and decision-making. Remarkably, it accomplishes all of this at an estimated power consumption of 20 W, similar to some lightbulbs [1], while digital computers draw several orders of magnitude more power, particularly when running AI algorithms that emulate only a subset of these capabilities. This vastly superior performance-to-efficiency ratio, evidenced by neuroscientific studies, raises the question: should we be looking more to the brain to explore the potential of biomimetic computation and algorithms, especially in the design of intelligent and efficient robotic agents?

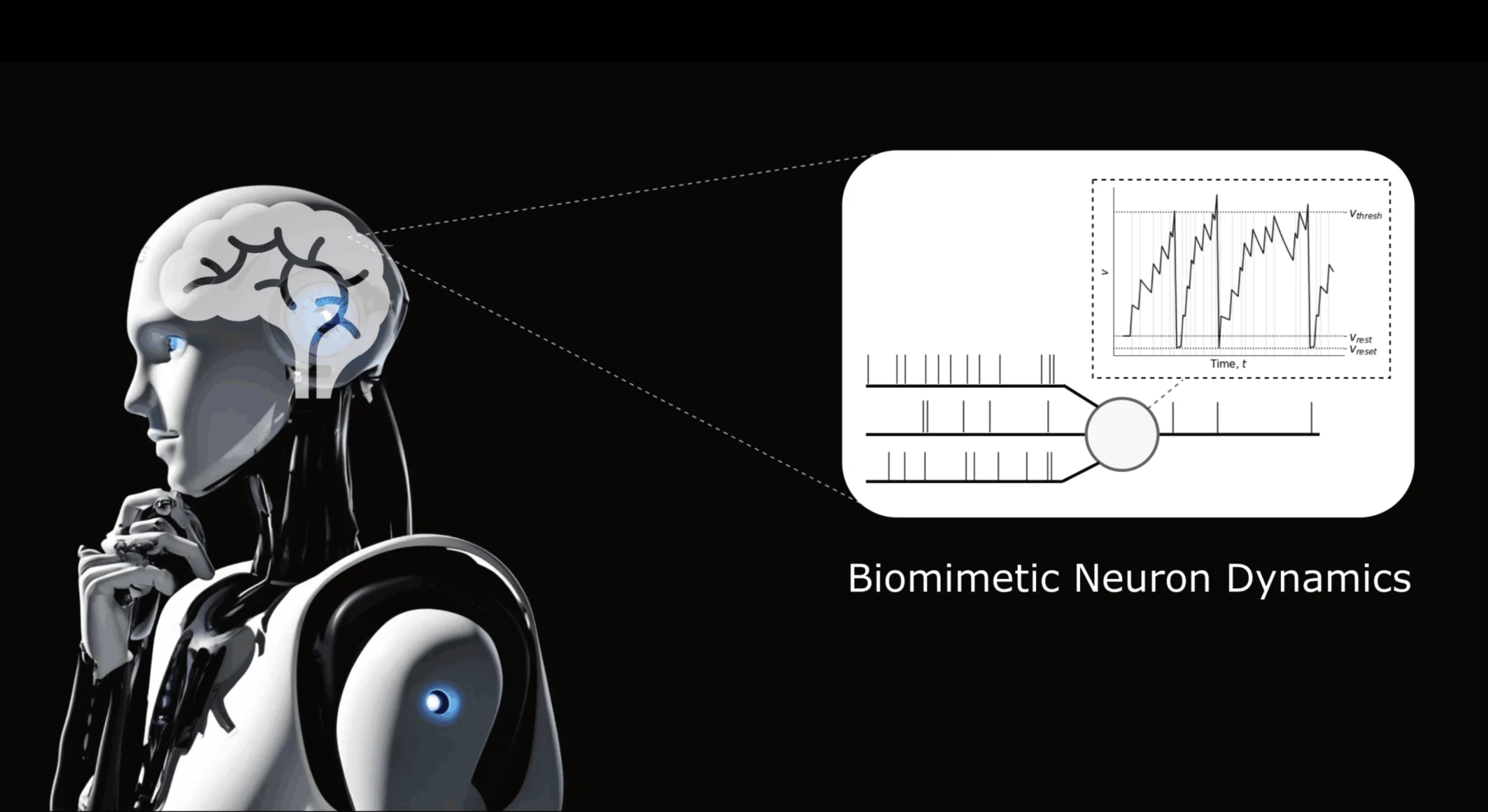

A promising outcome of research into this question is neuromorphic computing, which aims to mimic the fundamental neural architectures and characteristics of the brain in silico, and could give rise to the next generation of AI [2]. This computational paradigm was first presented in the 1980s by Caltech researcher Carver Mead [3], who suggested analogies between neuronal dynamics and the physics of sub-threshold operation in transistors, a fundamental electronic component. Unlike conventional digital computers, which implement the standard von Neumann architecture, neuromorphic computers are characterized by two features: their processing units operate asynchronously rather than being driven by a central clock, and processing and memory are not separated, since memory is inherently maintained within the processing units. In essence, these units are neurons that independently maintain an internal, time-varying activation signal and fire a “spike” when sufficiently excited. Computation thus emerges from spatio-temporally distributed sequences of binary signals flowing through a network of spiking neurons (more on those soon), just as observed in the nervous system. This principle has spawned brain-inspired neural network models such as spiking neural networks, processors such as dedicated neuromorphic chips, and sensors such as event cameras.

Why Neuromorphic Computing?

Apart from exploring the unknown potential in replicating the low-level features of biological intelligence, neuromorphic computing offers practical advantages. Most notably, empirical evidence from ongoing research points to significant gains in energy efficiency and speed for inference and learning in some AI applications. For example, studies on neuromorphic variants of an autonomous quadrotor navigation planner [4], a transformer-based generative pre-trained (GPT) language model [5], and a robot manipulator controller [6] have reported lower energy consumption by factors of approximately 3, 33, and 100, respectively. In various classical computation problems, neuromorphic implementations have similarly demonstrated lower latencies and higher energy efficiency [7]. These low-power and low-latency properties of neuromorphic processors can be explained by their key characteristics: inherently asynchronous computation, event-based computation, and in-memory processing. Notably, neuromorphic computers may be key to addressing the longstanding von Neumann bottleneck: a fundamental limitation of conventional computer architectures, in which the separation of memory and processing means that transferring data between memory and processor can take longer than the processing itself.

Therefore, brain-like computation could benefit various applications including autonomous vehicles, wearable devices, IoT sensors, and robotic systems. A particularly relevant application in healthcare and biomedicine is neuroprosthetic devices: wearable prosthetics that users control through a neural interface, using biological signals obtained from electromyography (EMG) or electroencephalography (EEG). As these signals originate from neuronal spiking activity, neuromorphic algorithms and hardware are a particularly suitable processing approach. In addition, advanced neuroprostheses must be relatively lightweight and energy-efficient to be commercially viable, a challenging requirement that neuromorphic methods could help meet.

Spiking Neural Networks: A Neuromorphic Algorithm

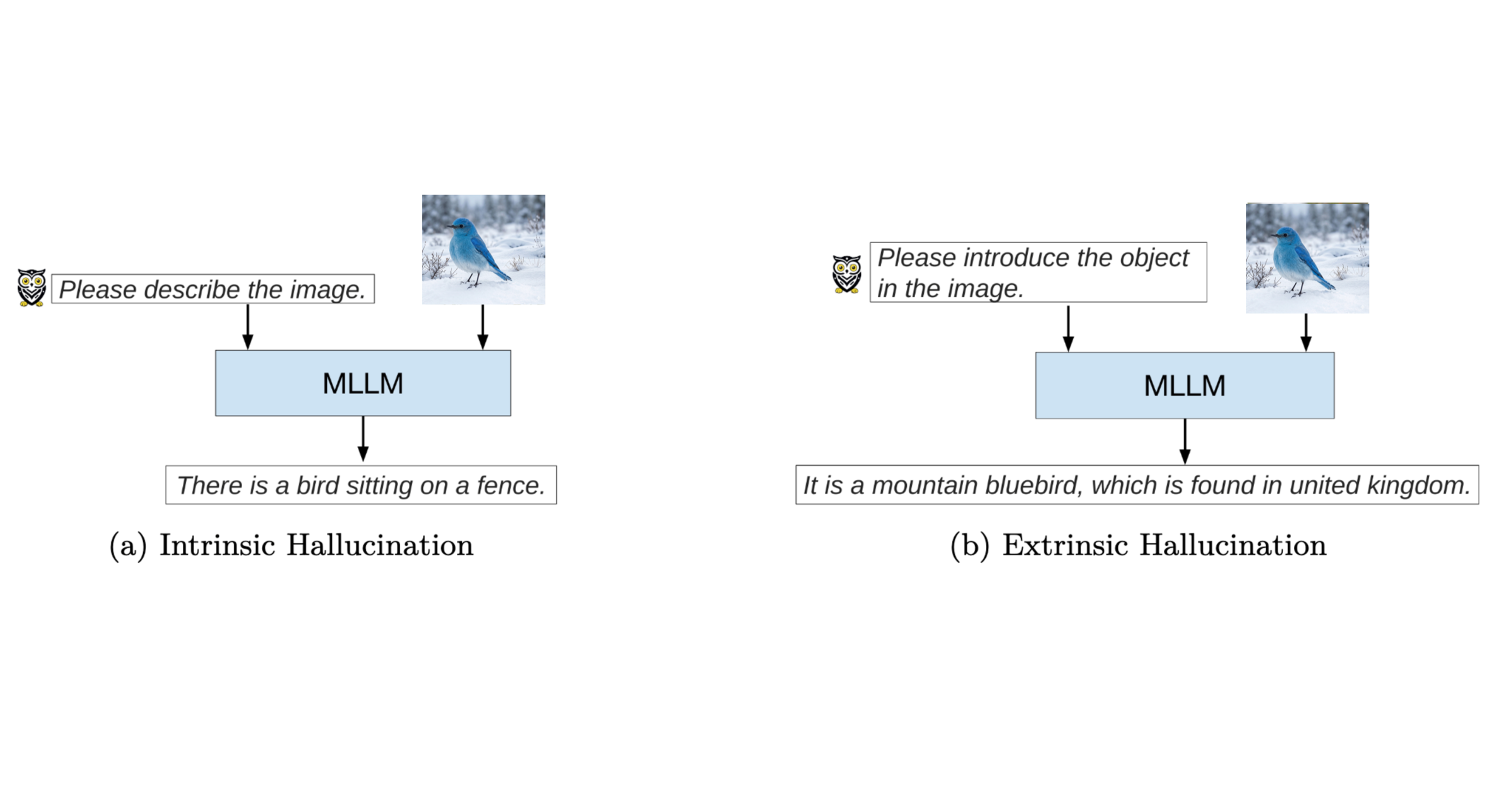

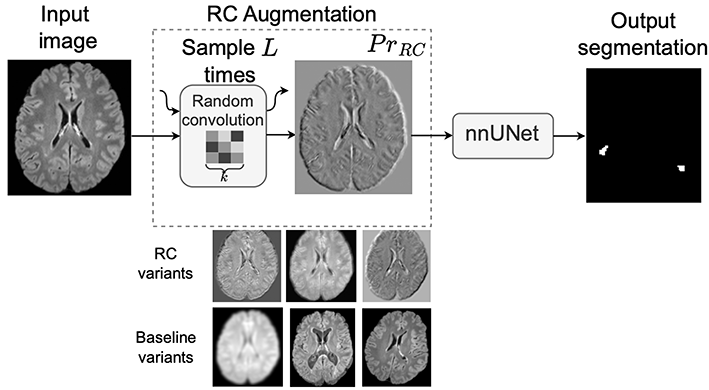

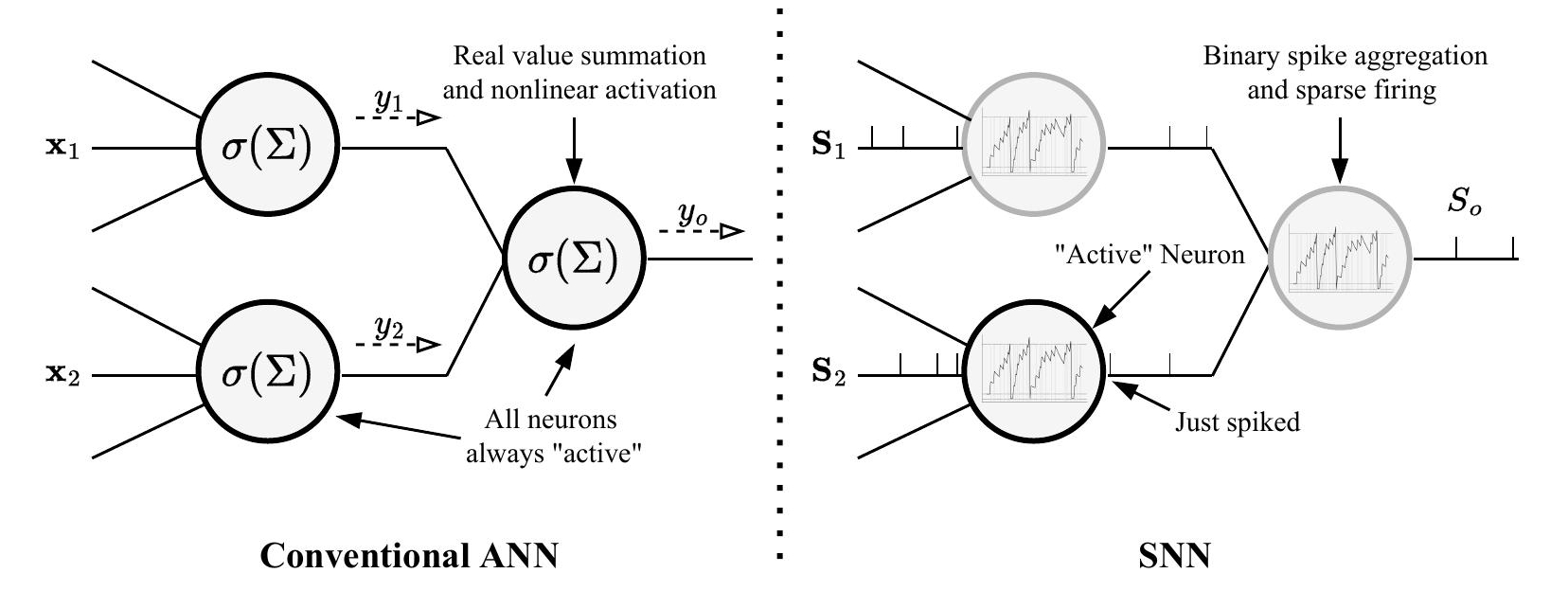

Spiking neural networks (SNNs), the most prominent form of neuromorphic computing and the so-called third generation of neural networks, are biologically plausible alternatives to conventional artificial neural networks (ANNs) that are designed to replicate the complex neuronal dynamics observed in the brain. While ANN units synchronously emit real-valued signals (a weighted sum of inputs followed by a nonlinear transformation), spiking neurons asynchronously communicate via trains of sparse, distinctly timed spikes. Input spikes contribute to a neuron’s membrane potential: a decaying internal aggregate of past inputs that approximates the voltage arising from differences in ionic concentrations across a nerve cell’s membrane. When the potential exceeds a threshold, the neuron fires a single spike (or action potential) and resets its potential. Individual neurons are therefore active only in response to a significant aggregate of recent inputs. Information can be encoded in the relative timings of spikes or in average spiking rates, the latter being a simplification that is analogous to the real-valued outputs of conventional artificial neurons. In essence, the time-varying membrane potential inherent to every neuron and the independent spike timings give SNN signals an additional temporal dimension.
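
To make the two coding schemes mentioned above concrete, here is a minimal Python sketch that is not taken from any particular SNN library. The function names `rate_encode`, `rate_decode`, and `latency_encode` are hypothetical, and both the Bernoulli sampling and the linear value-to-latency mapping are simplifying assumptions chosen purely for illustration.

```python
import random


def _check_unit_interval(value: float) -> None:
    if not 0.0 <= value <= 1.0:
        raise ValueError("value must lie in [0, 1]")


def rate_encode(value: float, n_steps: int, rng: random.Random) -> list[int]:
    """Rate coding (assumed Bernoulli scheme): spike with probability `value` per step."""
    _check_unit_interval(value)
    return [1 if rng.random() < value else 0 for _ in range(n_steps)]


def rate_decode(spikes: list[int]) -> float:
    """Recover an estimate of the encoded value as the mean firing rate."""
    return sum(spikes) / len(spikes)


def latency_encode(value: float, n_steps: int) -> list[int]:
    """Temporal coding (assumed linear mapping): larger values spike earlier."""
    _check_unit_interval(value)
    spike_index = round((1.0 - value) * (n_steps - 1))
    return [1 if i == spike_index else 0 for i in range(n_steps)]
```

With rate coding, the value is only recovered approximately and the estimate improves with the length of the spike train, whereas the latency code uses a single, precisely timed spike. This mirrors the trade-off between the two schemes described above.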

Mathematical approximations and abstractions of neuronal dynamics at different levels of fidelity have produced a variety of spiking neuron models. The simplest and most commonly used is the leaky integrate-and-fire (LIF) model, which captures the aforementioned characteristics by modelling the neuron as a simple RC circuit. In approximate order of increasing complexity, notable alternatives include the Spike Response Model (SRM), the Izhikevich model, and the Hodgkin-Huxley model. Despite being the earliest of these alternatives, the Hodgkin-Huxley model is the most computationally intensive, because it models sodium and potassium ionic flows across neuronal membranes in detail through differential equations describing a capacitive circuit. The success of the LIF model reflects a trade-off between biological fidelity and computational feasibility, particularly on resource-constrained hardware: we are still limited by hardware in our pursuit of faithful emulations of the nervous system.
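
As a rough illustration of the LIF dynamics, the sketch below integrates the normalized membrane equation dv/dt = (-(v - v_rest) + I) / tau with a forward-Euler step, then applies the threshold-and-reset rule. The class name `LIFNeuron`, the helper `simulate`, and all parameter values are assumptions made for this example and are not taken from a specific model implementation or chip.

```python
from dataclasses import dataclass


@dataclass
class LIFNeuron:
    """Discrete-time leaky integrate-and-fire neuron (illustrative parameters)."""

    tau: float = 20.0      # membrane time constant in ms (assumed value)
    v_rest: float = 0.0    # resting potential (normalized units)
    v_thresh: float = 1.0  # firing threshold (normalized units)
    dt: float = 1.0        # simulation time step in ms
    v: float = 0.0         # membrane potential, the neuron's only state

    def step(self, input_current: float) -> int:
        """Advance one time step and return 1 if the neuron spikes, else 0."""
        # Leak towards v_rest while integrating the input (forward Euler).
        # The membrane resistance is folded into input_current here.
        self.v += self.dt * (-(self.v - self.v_rest) + input_current) / self.tau
        if self.v >= self.v_thresh:
            self.v = self.v_rest  # reset the potential after firing
            return 1
        return 0


def simulate(neuron: LIFNeuron, currents: list[float]) -> list[int]:
    """Feed a sequence of input currents and collect the output spike train."""
    return [neuron.step(current) for current in currents]
```

Calling `simulate(LIFNeuron(), [1.5] * 100)` drives the neuron with a constant supra-threshold input, which should produce a regular spike train, while a constant input below the threshold (e.g. `0.5`) lets the potential settle without ever firing.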

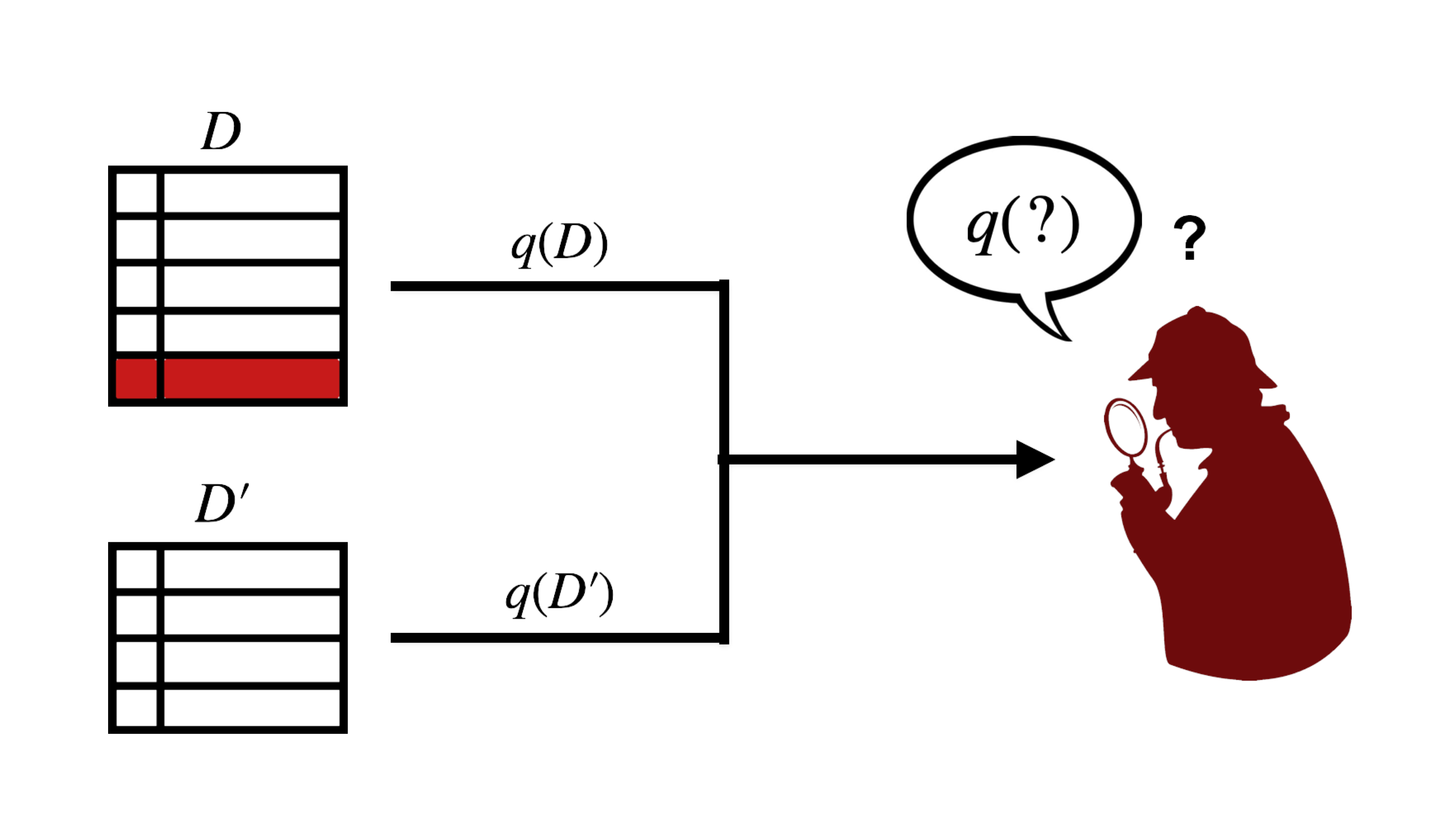

In the spirit of biomimicry and due to the unique properties of spiking data, learning in SNNs is realized through a distinct set of algorithms. Importantly, a spike train resembles a sequence of Dirac delta functions, which is a non-differentiable signal. This renders the standard backpropagation algorithm inapplicable, at least directly. In addition, global gradient descent is generally believed to be implausible in the brain, which makes it an undesirable approach when biological fidelity is prioritized. Instead, local, Hebbian-type learning rules are the most common option. The most prominent of these is spike-timing-dependent plasticity (STDP), in which the relative timing of pre- and post-synaptic spikes governs the adjustment of the synaptic weight between a pair of connected neurons. Nevertheless, efforts are still being directed towards replicating the success of backpropagation, for example by constructing surrogate gradient signals from spiking activity, which makes it possible to capitalize on well-established deep learning methods.
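
The STDP idea can be sketched with the commonly used pair-based formulation, in which a pre-synaptic spike that shortly precedes a post-synaptic spike strengthens the synapse, and the reverse order weakens it, with an exponentially decaying dependence on the time difference. The function names `stdp_delta_w` and `apply_stdp`, the amplitudes, the time constants, and the weight bounds below are all illustrative assumptions, not values from a specific study.

```python
import math


def stdp_delta_w(
    t_pre: float,
    t_post: float,
    a_plus: float = 0.01,     # potentiation amplitude (assumed)
    a_minus: float = 0.012,   # depression amplitude (assumed)
    tau_plus: float = 20.0,   # potentiation time constant in ms (assumed)
    tau_minus: float = 20.0,  # depression time constant in ms (assumed)
) -> float:
    """Weight change for one pre/post spike pair under pair-based STDP."""
    dt = t_post - t_pre
    if dt > 0:  # pre fired before post: strengthen the synapse
        return a_plus * math.exp(-dt / tau_plus)
    if dt < 0:  # post fired before pre: weaken the synapse
        return -a_minus * math.exp(dt / tau_minus)
    return 0.0  # simultaneous spikes: no change in this sketch


def apply_stdp(
    w: float, t_pre: float, t_post: float, w_min: float = 0.0, w_max: float = 1.0
) -> float:
    """Apply the update and clip the weight to [w_min, w_max]."""
    return min(w_max, max(w_min, w + stdp_delta_w(t_pre, t_post)))
```

Note that the update only needs the spike times of the two neurons that share the synapse, which is what makes the rule local, in contrast to the global error signal required by backpropagation.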

Event Cameras: A Neuromorphic Sensor

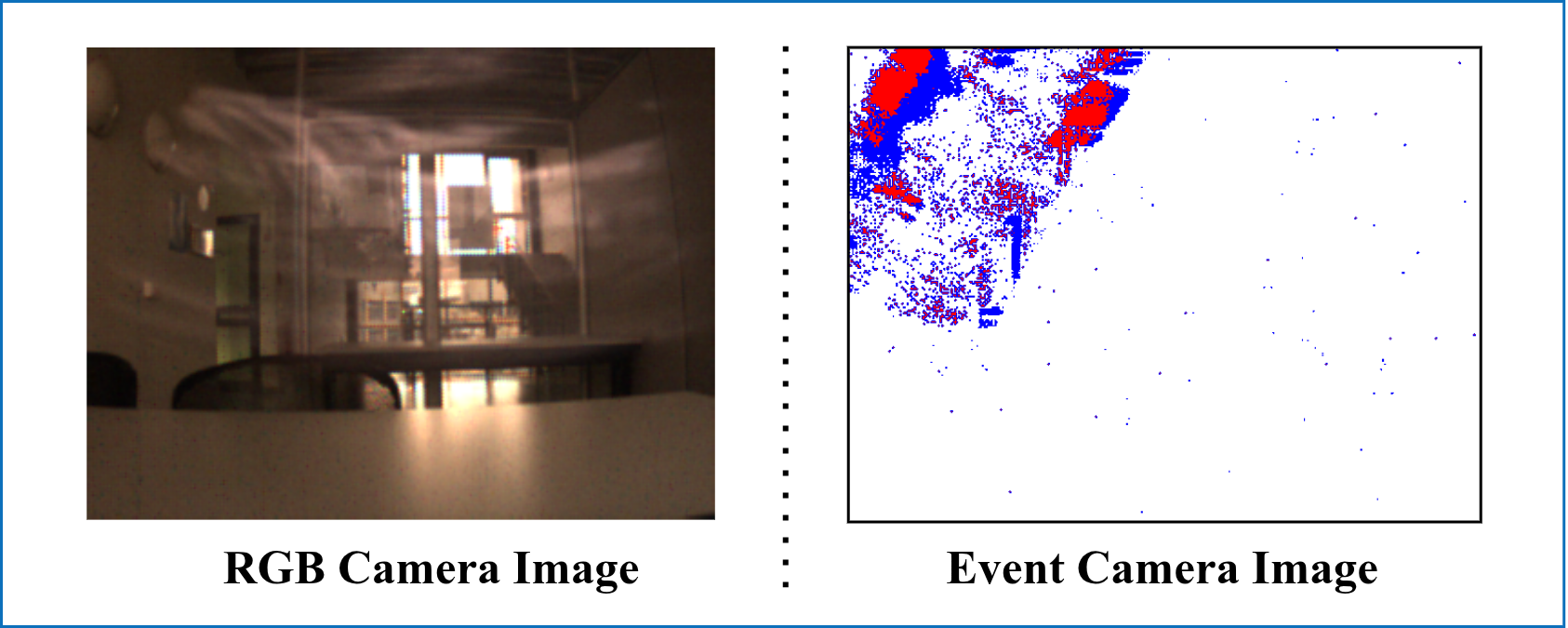

Event cameras (ECs) are an example of neuromorphic sensors. Originally called silicon retinas, ECs imitate the neural architectures of biological retinas in analog very-large-scale integration (VLSI) circuits. In particular, ECs exclusively record per-pixel events at which the change in intensity crosses a threshold, mimicking retinal photoreceptor cells. They therefore capture only significant intensity changes, which are often due to motion. In contrast, the pixels of conventional, frame-based cameras synchronously and continuously transmit absolute intensities. This external, clock-driven data acquisition naturally leads to redundant image information and the potential loss of inter-frame information, while event-based vision selectively acquires data based on scene dynamics. As a result, ECs offer lower power consumption, lower transmission latencies, higher dynamic ranges, and higher robustness to motion blur (illustrated in the image of a fast-moving object above, simultaneously captured by a color camera, where it is barely visible, and an event camera).

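Using an address event representation (AER), EC pixel arrays provide streams of spatio-temporally registered events, each of which can be represented as a tuple of pixel position, emission timestamp, and polarity: $e_k = (\mathbf{x}_k, t_k, p_k)$. Each pixel is independently monitored to compute a measure of the change in light intensity, e.g. the difference in raw, instantaneous intensities, $\Delta I$:

$$\Delta I(\mathbf{x}_k, t_k) = I(\mathbf{x}_k, t_k) - I(\mathbf{x}_k, t_k - \Delta t_k)$$

where $\Delta t_k$ is the time elapsed since the last event at pixel $\mathbf{x}_k$. When this difference crosses threshold $C$, event $e_k$ is emitted and designated an ON (+1) or OFF (-1) event:

$$p_k = \begin{cases} +1, & \Delta I(\mathbf{x}_k, t_k) \geq C \\ -1, & \Delta I(\mathbf{x}_k, t_k) \leq -C \end{cases}$$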
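
The thresholding rule described above can be emulated in software. The sketch below is a deliberately simplified, frame-based approximation: a real event camera operates asynchronously per pixel, whereas this code compares a new intensity frame against each pixel's stored reference intensity. The names `Event` and `generate_events`, and the choice of plain nested lists for frames, are assumptions made for this example.

```python
from typing import NamedTuple


class Event(NamedTuple):
    x: int         # pixel column
    y: int         # pixel row
    t: float       # emission timestamp
    polarity: int  # +1 for an ON event, -1 for an OFF event


def generate_events(reference, frame, t, threshold):
    """Emit events for pixels whose intensity change reaches the threshold.

    `reference` holds each pixel's intensity at its last event. Returns the
    list of events and an updated copy of the reference intensities.
    """
    if threshold <= 0:
        raise ValueError("threshold must be positive")
    events = []
    new_reference = [row[:] for row in reference]
    for y, row in enumerate(frame):
        for x, intensity in enumerate(row):
            diff = intensity - reference[y][x]
            if abs(diff) >= threshold:
                events.append(Event(x, y, t, 1 if diff > 0 else -1))
                # The pixel memorizes the intensity at which it last fired.
                new_reference[y][x] = intensity
    return events, new_reference
```

Pixels whose intensity barely changes produce no output at all, which is the source of the sparsity that makes event streams a natural match for spiking neurons.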

ECs are often most useful in vision-based applications that require high reaction speeds and impose computational resource constraints, such as aerial robotics. In addition, the similarity between event vision data and spiking neuron data (both sparse, binary signals) creates a natural synergy between ECs and SNNs, as shown in [8], in which obstacle avoidance during robot manipulation was realized through a neuromorphic perception pipeline that utilizes both components.

Neuromorphic Processors

Neuromorphic processors are the specialized computers that implement the neuronal dynamics discussed so far. It is on this hardware that the advantages of neuromorphic computing are fully realized and have been validated. Fundamentally, these processors model membrane potential evolution using voltages across capacitors or transistor sub-/supra-threshold dynamics, and transfer spikes via an AER, similar to ECs. The technology is still in active development, and most processors are not commercially available and are intended only for research purposes. Nevertheless, the growing interest in neuromorphic computing has led to a wide array of pioneering neuromorphic processors. Among the most prominent are Intel’s Loihi, IBM’s TrueNorth, Stanford’s Neurogrid, BrainScaleS, SpiNNaker, DYNAP, PARCA, Braindrop, ODIN, and DeepSouth.

Outlook: Towards Biomimetic, Efficient AI

Modern AI has achieved extraordinary but specialized accomplishments that still fall short of more general, natural intelligence, all while its energy consumption soars. In view of this, neuromorphic computing offers a promising avenue for future research. By taking the brain as an exemplary model and mimicking its biological computation, neuromorphic methods could move us closer to the effective, energy-efficient, robust, and adaptive real-time capabilities observed in nature, which could be transformative for robotics. Researchers hope to advance towards this goal through the guiding principles of:

- asynchronous event-driven communication.

- spike-based neural processing.

- analog neuronal dynamics.

- local synaptic adjustments.

This approach serves an interesting dual purpose: enhancing AI systems with lessons from neuroscientific research, and advancing our understanding of the brain by experimenting with neurologically-inspired platforms. At the risk of premature conjecture, it is tempting to wonder: could this novel computational approach be the key to a breakthrough in AI for more sustainable and general intelligence?

Footnotes

- Suggested reading: a journal paper on neuromorphic perception for obstacle avoidance in robot manipulation [8], which provides a deeper background on neuromorphic computing and was used as a reference for much of this post.

References

1. Drubach, D. (2000). The brain explained. Pearson.

2. Boosting AI with neuromorphic computing. (2025). Nature Computational Science, 5, 1-2.

3. Mead, C. (1990). Neuromorphic electronic systems. Proceedings of the IEEE, 78(10), 1629-1636.

4. Sanyal, S., Manna, R. K., & Roy, K. (2024). EV-Planner: Energy-efficient robot navigation via event-based physics-guided neuromorphic planner. IEEE Robotics and Automation Letters, 9(3), 2080-2087.

5. Zhu, R. J., Zhao, Q., Li, G., & Eshraghian, J. K. (2023). SpikeGPT: Generative pre-trained language model with spiking neural networks. arXiv preprint arXiv:2302.13939.

6. DeWolf, T., Patel, K., Jaworski, P., Leontie, R., Hays, J., & Eliasmith, C. (2023). Neuromorphic control of a simulated 7-DOF arm using Loihi. Neuromorphic Computing and Engineering, 3(1), 014007.

7. Davies, M., Wild, A., Orchard, G., Sandamirskaya, Y., Guerra, G. A. F., Joshi, P., ... & Risbud, S. R. (2021). Advancing neuromorphic computing with Loihi: A survey of results and outlook. Proceedings of the IEEE, 109(5), 911-934.

8. Abdelrahman, A., Valdenegro-Toro, M., Bennewitz, M., & Plöger, P. G. (2025). A Neuromorphic Approach to Obstacle Avoidance in Robot Manipulation. The International Journal of Robotics Research, 44(5), 768-804.